I Used to Think UBI Was Stupid

For most of my career, “Universal Basic Income” lived in the same mental bucket as perpetual motion machines and crypto utopias. I’m a DevOps engineer. I live in the land of budgets, blast radius, on-call rotations, and the hard physics of incentives. If something can be gamed, it will be gamed. If something can get cost-optimized into uselessness, it will. So when people pitched UBI as the answer to automation, I didn’t just doubt it, I actively disliked it.

My skepticism wasn’t vague vibes. It was specific, and I found it pretty compelling. The objections ranged from the practical (how can we pay for it?) all the way to the moral (it will make people lazy).

That was my stance. UBI was a nice thought experiment, and mostly a distraction from the real work.

Then I Spent an Afternoon With Opus 4.5

I recently had a work session that completely changed my mind on UBI.

We’ve been building internal AI infrastructure, and that meant wiring up MCP servers, basically tool and data connectors for models so they can operate inside real systems instead of hallucinating in a vacuum.

I was using Claude Opus 4.5 as my coding and infra copilot via Claude Code.

It wasn’t just “good at code.” It was good at the whole loop. It could read the repo, ask the right clarifying questions, map the dependency graph, propose a plan that didn’t suck, and then actually execute with minimal babysitting. It could generate server scaffolding, handle auth flows, write the docs, produce the deployment manifests, and then spot the places my assumptions were brittle. It felt less like autocomplete and more like pairing with a senior engineer who never gets tired and doesn’t mind trawling logs at 2 a.m.

Halfway through, I caught myself staring at the screen thinking: oh shit, how in the world is “coding” going to be a job in a few years, if it’s already this good, and it keeps improving at this pace?

One Year of Model Progress Now Feels Like Ten

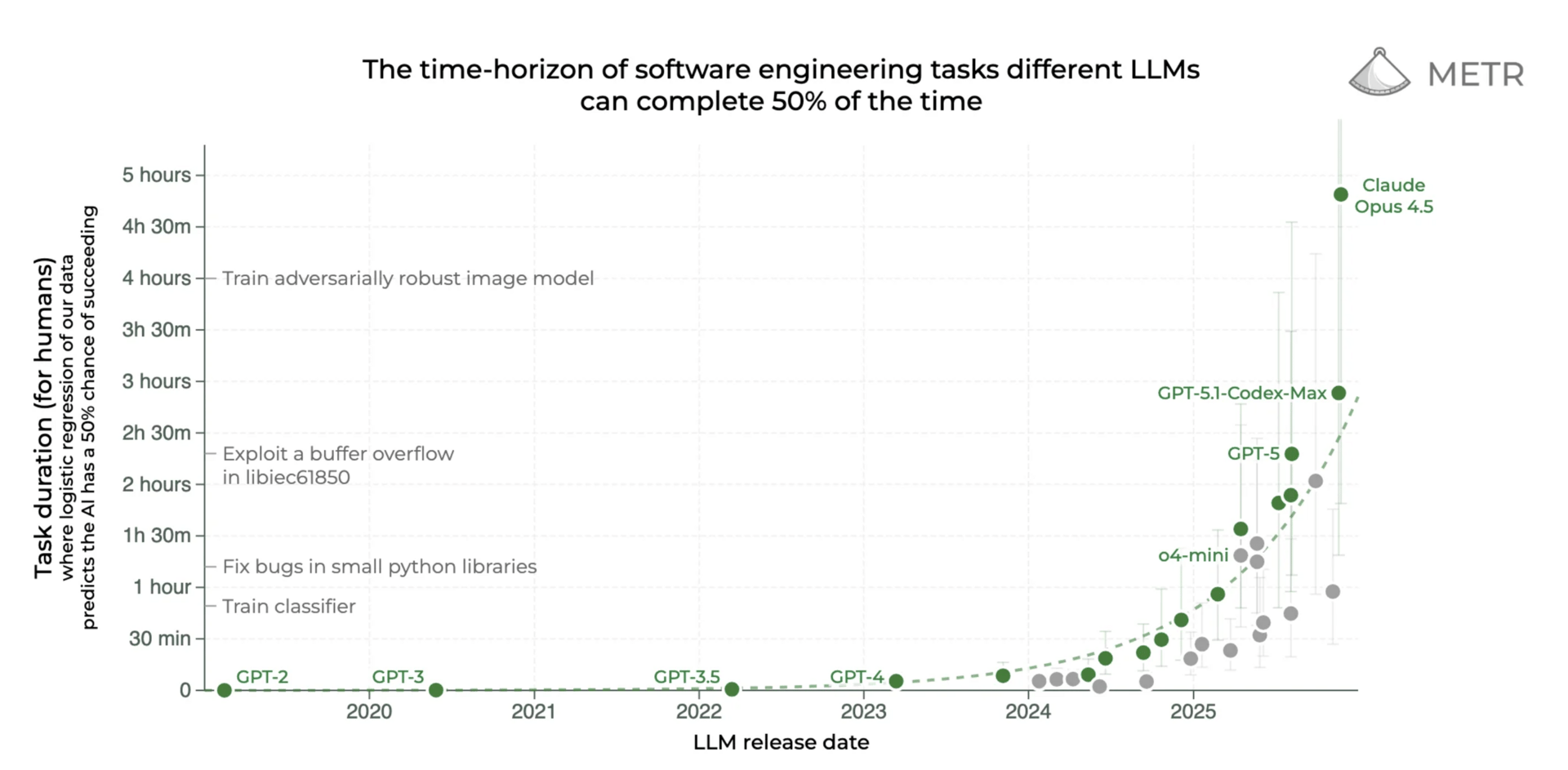

I don’t think people understand how violent the time compression is.

Claude 3.5 Sonnet showed up in mid-2024 and, at the time, it felt like the bar moved. Anthropic marketed it as a big leap in reasoning and coding for a mid-tier model. And yet when I look back from where we are now, that version of “good” feels like it’s from a different era. Not ancient history. Not “the 90s.” More like: last week’s build that you can’t believe ever shipped.

The uncomfortable part is not that models got better. The uncomfortable part is how fast they got better, and how measurable it is. On SWE-bench Verified, Anthropic’s own reported numbers put Opus 4.5 at 80.9% accuracy.

If you want a broader view than vendor charts, Stanford’s AI Index has been tracking how quickly new benchmarks fall. Their 2025 report notes that AI is mastering new benchmarks faster than ever, including enormous jumps on SWE-bench in a single year. And they also note that model scale keeps growing rapidly, with training compute doubling on the order of months, not decades. I can already hear people screaming “BUT IT CAN’T DO THIS.” This might be true at the moment, but I assure you, it will be able to do it within a few years.

This is not “slow and steady.” This is compounding, and in the case of agentic task duration benchmarks, even hyper-exponential.
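To make “doubling on the order of months” concrete, here is a minimal sketch of plain compounding. The doubling periods are hypothetical values picked only to show the shape of the curve; they are not figures from the AI Index report. The function name `growth_factor` is made up for this example. It models a fixed doubling period only, so it does not capture a curve whose doubling time itself shrinks.

```python
def growth_factor(doubling_months: float, horizon_months: float) -> float:
    """Multiplicative growth over horizon_months for a quantity
    that doubles once every doubling_months."""
    return 2.0 ** (horizon_months / doubling_months)


# Hypothetical doubling periods (assumptions, not measured values).
for doubling in (24, 12, 6):
    factor = growth_factor(doubling, horizon_months=36)
    print(f"doubling every {doubling:>2} months -> x{factor:.1f} after 3 years")
```

Halving the doubling period does not double the three-year outcome, it squares it. That is why a shift from “years” to “months” feels like a different regime and not just a faster version of the old one.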

The Secret Sauce Is Verifiable Rewards, and That Scales Beyond Code To Virtually All Industries

Here’s the part that really made the doom settle in: the verifiability that makes AI increasingly good at coding can be applied to other industries just as easily. In fact, this is already starting: OpenAI recently hired 100 ex-investment bankers to help automate financial work.

In software, reality is ruthless. The tests pass or they don’t. The build is green or it’s red. The service stays up or it pages you at 3 a.m. Benchmarks like SWE-bench are built on that property, using real repos and unit tests as the scoring mechanism.

And once you can train models using reinforcement learning where the reward is “did it actually work,” you’ve unlocked a general recipe. This is the broader idea behind reinforcement learning with verifiable rewards (RLVR): reward the model when it produces outputs that pass objective checks, like correct solutions in math or passing unit tests in code. There’s active research arguing RLVR can extend reasoning boundaries in both mathematical and coding tasks.
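As a rough illustration of what “the reward is did it actually work” means, here is a minimal sketch of a verifiable reward function. It is only the scoring step, not a training loop, and it is not how any particular lab implements RLVR. The names `verifiable_reward`, `good_attempt`, and `bad_attempt` are hypothetical, and a real pipeline would run candidate code in a sandbox instead of calling it directly.

```python
from typing import Callable, Iterable, Tuple

# One test case: (positional arguments, expected return value).
TestCase = Tuple[tuple, object]


def verifiable_reward(candidate: Callable, tests: Iterable[TestCase]) -> float:
    """Return 1.0 only if the candidate passes every test, else 0.0."""
    for args, expected in tests:
        try:
            if candidate(*args) != expected:
                return 0.0
        except Exception:
            # A crash counts as a failure, same as a wrong answer.
            return 0.0
    return 1.0


# Two hypothetical "model outputs" for the task "add two integers".
def good_attempt(a, b):
    return a + b


def bad_attempt(a, b):
    return a - b


tests = [((1, 2), 3), ((0, 0), 0), ((-1, 1), 0)]
print(verifiable_reward(good_attempt, tests))  # 1.0
print(verifiable_reward(bad_attempt, tests))   # 0.0
```

No human judgment is needed to produce the score. Anything with a mechanical pass/fail check can, in principle, be plugged in where `tests` is.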

Now take a step back and look at the economy. How much of what we call “knowledge work” is actually verifiable?

Accounting closes or it doesn’t.

A contract is compliant or it isn’t.

A tax filing is accepted or rejected.

A diagnosis matches evidence-based guidelines or it doesn’t.

A supply chain plan hits constraints or fails them.

A marketing funnel converts or it doesn’t.

A security incident gets contained or it escalates.

Even in areas that feel fuzzy, the world is full of scoreboards. And AI companies are building better scoreboards as fast as they’re building better models.

Google DeepMind literally described training loops where verified proofs are used to reinforce models for harder problems, because formal systems let you check correctness mechanically. That is the same underlying dynamic. If you can verify outcomes, you can grind improvements.

This Isn’t the Industrial Revolution, It’s the Automation of Cognition

I’m tired of hearing that this is “just like every other technological change.” It’s not.

Historically, automation has eaten tasks. It took muscle, it took repetitive motion, it took certain kinds of routine clerical work. But AI is coming for something different: it’s coming for the ability to do the thinking that produces the tasks. The IMF has been blunt about what makes AI different: it’s not confined to routine work, it’s exposed across a huge portion of employment, including high-skilled jobs.

And the pace is not theoretical. The UK’s AI Security Institute has published trend data showing major capability jumps across cyber, autonomy skills, and other domains, including time horizons increasing and success rates rising fast over short periods. You do not get to wave that away with “people said that about the loom.”

This is the uncomfortable core: we are automating intelligence itself. Not “a job.” Not “an industry.” The general-purpose capacity to read, reason, decide, and execute across domains.

People Will Not Be Smart Enough to Do the “New Jobs” Created by AI

This header is deliberately inflammatory, because subtlety and nuance are how we lie to ourselves.

Let me give you a crude example of why the “new jobs” created by AI will increasingly be out of reach, no matter how much human job “retraining” is done.

Let’s focus on the “transportation” industry. Imagine a pre-tech world where the industry of “transport” has just been invented. In this pre-modern age, a person’s job is literally to pick up sticks and bring them back to camp to make a fire. Call that a job that requires an “IQ of 50.” Not as a scientific claim, and not as a moral judgment. Just a shorthand for how much complexity, abstraction, and training is required.

Then the technology ladder starts: new technologies are invented, one after another, to increase the productivity of “transport”:

Wheelbarrow: now you need coordination and planning. “IQ 60.”

Wagon pulled by a horse: you need to manage a horse and maintain gear. “IQ 70.”

Automobile: you need to drive, navigate, understand machinery at a superficial level. “IQ 75.”

Autonomous vehicles: the new job is managing fleets, exceptions, maintenance scheduling. “IQ 90.”

Managing the fleet software: now you need to configure systems, write scripts, integrate tooling. “IQ 110.”

Automating the management of the fleet software: now you’re building the automation itself. “IQ 130.”

Again, the numbers are not the point. The slope is the point.

The pattern is: the replacement job usually requires more abstraction, coordination, and general complexity than the job that just got automated. This makes sense, since the technology that replaces the old one is generally more complex and advanced. As a result, managing newer and more complex technologies will almost always require a higher degree of intelligence, bar a few edge cases. That used to be fine when the level of intelligence required to handle new technologies still fell well below the human IQ band. Now, precisely because of AI, new technologies are rapidly crossing this human intelligence threshold. And once an AI is better than the median human at the abstraction layer, the “new jobs” will only be accessible to an increasingly small few.

To put it more plainly, any new job that gets created will be cognitively out of reach for a growing minority, and then a majority, of people. EVEN in the edge case of a new job that most humans can still do, what is stopping AI from doing it faster and cheaper?

Why the “Don’t Worry” Narrative Feels Like Gaslighting

There are a few groups of people who, for very different reasons, keep telling you this is fine.

Some of them are AI executives doing what executives do: shaping perception, calming regulators, keeping customers from panicking, and keeping markets from pricing in social instability. I don’t need a conspiracy theory to explain that. Incentives explain it.

Some of them are professional skeptics and contrarian commentators. Skepticism is healthy. It’s also a business model, and business models have gravitational pull.

Some of them are influencers whose income depends on humans continuing to believe that the core of knowledge work, especially coding, will remain a human-only craft if you just grind hard enough and keep learning the right stack.

And then there’s the quiet group that doesn’t post hot takes: the people actually using these tools in real knowledge work. The vibe is not “lol it writes boilerplate.” The vibe is: “This thing is starting to eat the middle of my job, and it’s not slowing down.”

Even the cultural signals are weird. There is a documented trend of wealthy tech leaders and elites engaging in doomsday prepping and bunker narratives. At the same time, it’s also true that prominent figures have publicly denied having bunkers, so I’m not claiming every exec is digging a hole in New Zealand. The point is simpler: when some of the people closest to the power curve are building bunkers and preparing for a Mad Max world, maybe skeptics should stop pretending that AI will only ever produce “AI Slop.”

The Economy Is Mostly Verifiable Work Wearing a Human Costume

Here’s another reality check. Modern economies are dominated by services. OECD work summarizes the long-run rise of services in global GDP, and World Bank data shows just how services-heavy advanced economies are.

Services sounds like “human stuff,” but services are full of checklists, workflows, compliance gates, and measurable outcomes. The ILO’s work on occupational exposure to generative AI is basically a map of how many jobs contain tasks that can be transformed or partially automated by systems like this. And the IMF’s estimate that around 40% of global employment is exposed to AI should not be read as “40% of jobs vanish tomorrow,” but it absolutely should be read as: the labor market is going to get punched in the mouth.

Also, we have empirical evidence that these tools already move productivity and workflow shape, not just toy demos. There’s rigorous research showing generative AI assistance increased productivity in customer support, with outsized gains for less experienced workers. And controlled experiments have shown large speedups for coding tasks with AI pair programming tools.

If you’re a DevOps person, you know where this goes. The first phase is “assistants.” The second phase is “agents with tools.” MCP exists because the industry is standardizing how models plug into real systems. Once the model can read, write, run, verify, and iterate inside your environment, a huge fraction of what we call “work” becomes an orchestration problem. And orchestration is exactly what these systems are being trained to do.
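The “read, write, run, verify, and iterate” loop can be sketched in a few lines. This is a toy under stated assumptions: `run_until_verified`, `toy_propose`, and `toy_verify` are hypothetical names, the “model” just replays canned attempts, and nothing here uses MCP or any real model API.

```python
from typing import Callable, Optional


def run_until_verified(
    propose: Callable[[Optional[str]], str],
    verify: Callable[[str], Optional[str]],
    max_attempts: int = 5,
) -> Optional[str]:
    """Request a candidate, check it, and feed the failure back
    until a candidate passes or the attempt budget is spent."""
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        candidate = propose(feedback)
        feedback = verify(candidate)  # None means the check passed
        if feedback is None:
            return candidate
    return None


# Toy stand-ins: a "model" that replays canned attempts, and a simple check.
attempts = iter(["replicas: 0", "replicas: 1", "replicas: 3"])


def toy_propose(feedback: Optional[str]) -> str:
    # A real agent would use the feedback; this toy ignores it.
    return next(attempts)


def toy_verify(candidate: str) -> Optional[str]:
    return None if candidate == "replicas: 3" else f"rejected: {candidate}"


result = run_until_verified(toy_propose, toy_verify)
print(result)  # replicas: 3
```

The human-shaped part of this loop is deciding what `verify` should check. Everything else is retry logic, which is exactly the kind of thing we already automate.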

UBI Is Not a Utopia, It’s the Minimum Viable Patch

I’m not saying UBI is perfect. I’m saying it’s going to be necessary, or something very close to it.

Not because it’s philosophically cute. Because if labor stops being the primary distribution mechanism for survival, you need a new mechanism.

And this is not purely theoretical anymore. We’ve seen real-world experiments with unconditional cash and basic income style programs:

Finland’s basic income experiment (2017-2018) found no major employment effect but did find improved wellbeing and reduced bureaucracy.

Stockton’s guaranteed income demonstration provided unconditional cash and has been studied as a real municipal pilot.

A large unconditional cash study backed by OpenResearch (linked in coverage to Sam Altman) gave participants monthly payments and reported meaningful quality-of-life effects.

You can argue about design details forever. Tax structure. Phase-outs. Regional cost adjustments. Whether it’s UBI or guaranteed income or a negative income tax. Fine. Those are implementation arguments.

The core point is: we need a durable floor that is not tied to having a job, because “having a job” is no longer guaranteed to be a stable property of being a human.

Free Market Nostalgia Won’t Feed People When Labor Is Optional

At the end of the day, no one cares about fancy free market ideals if the market stops buying what most people sell.

The “free market” story assumes that most humans can exchange their labor for money, and use that money to buy goods. But if AI does the labor, and the ownership of the AI is concentrated, then the market becomes a distribution system that pays capital and shrugs at everyone else. You can’t “hustle” your way out of that at scale. You can’t reskill an entire population into the tiny fraction of roles that remain scarce, especially when the frontier keeps moving and the models climb the ladder too.

So here’s the blunt fork in the road. Either we build some kind of radical wealth distribution system that keeps society coherent in a world where intelligence is cheap, or we get the ugly outcomes humans always get when inequality becomes structurally locked in.

That ugly range includes: political violence, social breakdown, scapegoating, and authoritarianism. It includes the rich retreating behind literal and figurative walls. It includes a world where “security” for the rich becomes the growth industry and everyone else becomes a problem to be managed. Think trillionaires riding in bulletproof cars with humanoid security guards, trying not to get Luigi Mangione’d. There’s no stable middle ground where the majority is permanently economically irrelevant and everything still stays chill.

UBI is not a feel-good dream. It’s a pressure valve. It’s the minimum viable civilization patch for the labor market crash we are sprinting toward.

And if we refuse to ship that patch, we are all screwed.